Key Takeaways

- A review of public disclosures indicates that governance of Artificial Intelligence (AI) at U.S. companies remains at a formative stage. This stage is characterized by limited transparency, uneven institutionalization, and concentrated leadership among a subset of firms.

- Disclosure of AI oversight and formal AI policy frameworks are the exception rather than the norm. Out of 3,048 U.S. companies across the Russell 3000 and S&P 500, only 245 (8%) disclosed board-level oversight of AI. Further, only 275 companies (9%) acknowledged having established policies on AI.

- Board-level AI skills also remain scarce across the broader market. Of the 3,048 companies, 481 (16%) disclosed the presence of at least one director with specialized AI skills.

- Where AI-related policies, oversight, or board-level skills are present, they tend to be concentrated in five sectors: Consumer Discretionary, Financials, Health Care, Information Technology, and Industrials.

- These findings suggest that AI deployment is outpacing governance adoption, creating a structural gap between technological adoption and risk oversight.

Introduction

Artificial Intelligence is widely recognized as a transformative general-purpose technology reshaping how we live, work, create, communicate, and learn. Organizations are rapidly adopting AI tools to drive efficiency, innovation, and performance. According to the 2025 AI Index Report from Stanford University:

In 2024, U.S. private AI investment grew to $109.1 billion—nearly 12 times China’s $9.3 billion and 24 times the U.K.’s $4.5 billion. Generative AI saw particularly strong momentum, attracting $33.9 billion globally in private investment—an 18.7% increase from 2023. AI business usage is also accelerating: 78% of [global] organizations reported using AI in 2024, up from 55% the year before.

The recently released 2026 edition of What Directors Think from Corporate Board Member observes, “Artificial intelligence has become the connective tissue running through virtually every strategic priority, risk concern, and governance challenge facing boards.”

Clear accountability through defined roles, policies, and controls ensures AI-enabled decisions remain traceable and aligned with corporate strategy, legal obligations, and fiduciary duties. Without clear oversight, accountability structures, and controls, AI introduces material financial, legal, and reputational risks that may directly affect long-term shareholder value.

Although corporate adoption of AI is accelerating, the governance infrastructure required to oversee it remains at a nascent stage. Current data indicate a critical gap between technological capabilities and risk management. This article examines the current state of AI governance maturity in the United States, using the ISS Governance QualityScore to analyze 3,048 companies across the Russell 3000 and S&P 500 to identify early leaders and highlight gaps relevant to asset managers and stewardship teams.

ISS Governance’s own analysis of shareholder proposals, as presented in A Look at AI-Related Shareholder Proposals at U.S. Companies, 2022-2025, indicates the growing presence of Artificial Intelligence in the proxy voting landscape. Concentrations of shareholder proposals related to AI largely appear on ballots for large U.S. technology and e-commerce companies, similar to how AI-related corporate disclosures are concentrated among those companies. However, not all such companies with AI-related shareholder proposals effectively provide shareholders with assurances of their AI governance.

One particular focus of AI-related shareholder proposals is data centers and their growing energy usage, capital expenditure, and climate impact. Corporate integration of Artificial Intelligence and media coverage of the technology continue to be focal points for U.S. shareholders. Governance considerations related to AI may expand in the future, and companies that fail to establish such structures may fall behind.

Sectoral Concentration of AI Board Oversight

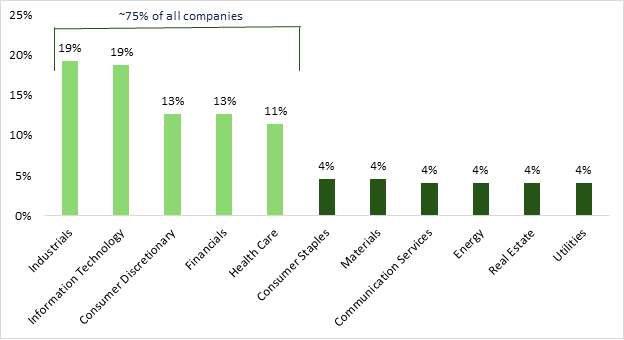

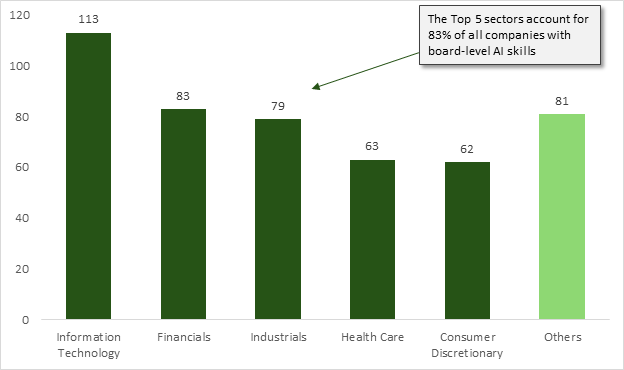

AI board oversight remains limited in scope and highly concentrated across a small number of sectors. Only 245 companies out of the 3,048 analyzed disclose the existence of board-level oversight of AI, indicating that such governance structures are still far from standard practice in U.S. public markets (Figure 1).

Figure 1: Percentage of Companies That Have AI Board Oversight by Sector

Note: The figure covers 245 companies. Percentages do not add up to 100 due to rounding.

Source: ISS Governance QualityScore, January 2026

The top five sectors—Industrials, Information Technology, Consumer Discretionary, Financials, and Health Care—collectively represent nearly 75% of all disclosed instances of AI board oversight, demonstrating that governance practices remain narrowly distributed rather than broadly embedded across the corporate landscape. The pronounced clustering among Industrials and Information Technology suggests that oversight adoption is being driven primarily by sectors with either high operational automation intensity or direct exposure to digital technologies.

This uneven adoption pattern points to an emerging two-tier governance structure, where a limited group of early adopters is formalizing oversight mechanisms while many other firms remain at informal or underdeveloped stages. The finding suggests that AI governance is currently sector-led rather than market-led, with institutionalization occurring in response to operational exposure rather than as a generalized governance norm.

From a risk perspective, this concentration raises questions about systemic preparedness, consistency of oversight standards, and the scalability of governance frameworks as AI deployment continues to expand across sectors.

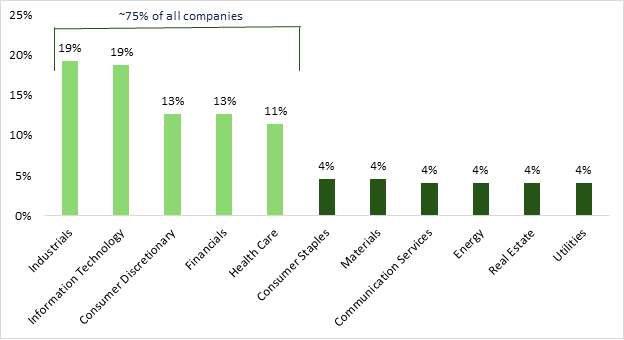

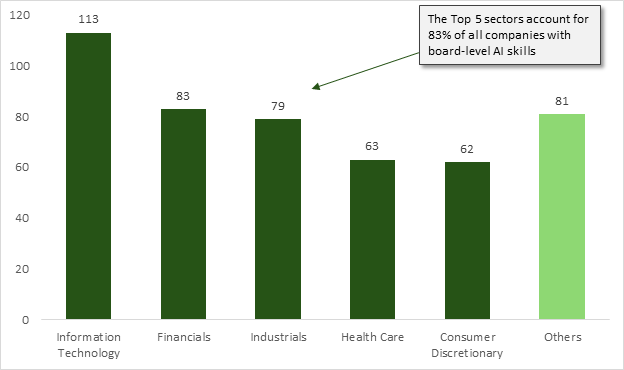

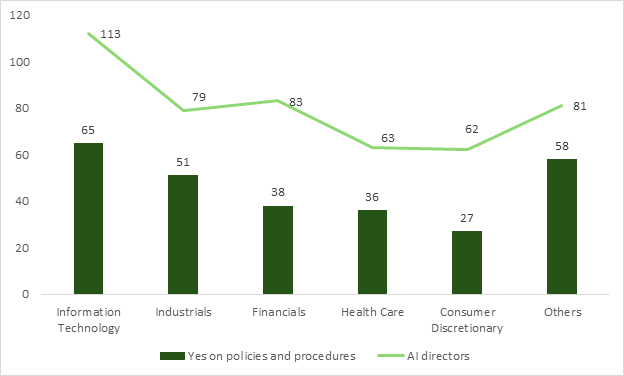

Concentration and Depth of Board-Level AI Skills

Like board oversight, AI technical skills are highly concentrated within a narrow subset of industries. Of the 3,048 companies reviewed, only 481 organizations (16%) disclosed the presence of at least one director with specialized AI skills. Within these companies, five sectors account for 83% of all AI-skilled boards, suggesting that governance maturity is siloed in industries where AI is viewed as a primary operational driver (Figure 2).

Figure 2: Concentration of AI Skills by GICS Sector

Note: The figure covers 481 companies.

Source: ISS Governance QualityScore, January 2026

Further, among the five top-performing sectors, only 128 companies (4%) have moved beyond a single “lone expert” to seat two or more directors with AI skills. This “leader” group exhibits even sharper sectoral clustering, with over half of these multi-expert boards residing in Information Technology (32%) and Industrials (19%).

These data highlight a dual risk: a vast “skills gap” in laggard sectors and a “key-person risk” in the many companies relying on a single voice for oversight. The findings suggest that while technical leadership is solidifying in tech-heavy and industrial fields, many companies have yet to establish AI fluency at the board level.

The prevailing landscape is consequently defined by a sharp divide between a small group of “AI-fluent” leaders and a vast majority of boards that lack the skills and talent required for complex AI risk assessments. The uneven distribution underscores a critical mismatch between the rapid, cross-sector deployment of AI and the slower, more concentrated evolution of the governance structures meant to oversee it.

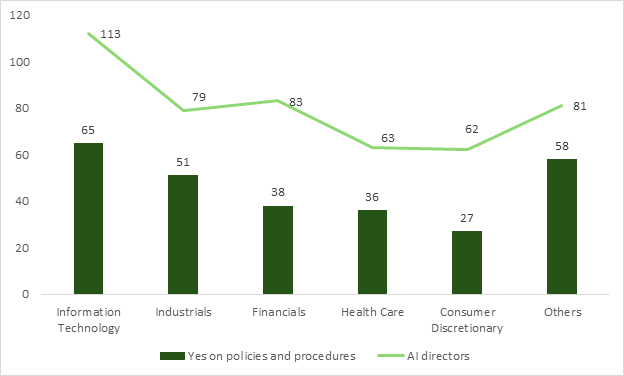

Establishing Formal Guardrails: Analysis of AI Policies and Procedures

Consistent with the findings above, the data on formal AI policies and procedures confirm that governance infrastructure is limited. Of the 3,048 companies in the analysis, 275 (9%) confirmed the existence of established policies for the development, deployment, and monitoring of artificial intelligence. This low rate of affirmative disclosure suggests that for most of the Russell 3000 and S&P 500, AI integration is currently occurring in a policy vacuum, potentially exposing organizations to significant ethical, legal, and operational risks.

Mirroring the trends seen in board skills, these governance frameworks are heavily concentrated in the same top five sectors. This distribution indicates a “governance lag,” where AI use cases are expanding rapidly despite the absence of formal guardrails. The data imply that most companies are currently managing AI through ad hoc processes rather than the standardized, traceable procedures required for robust fiduciary oversight.

Within the five leading sectors, the presence of AI policies and procedures is positively correlated with the presence of board members with AI skills. Nevertheless, a striking “readiness gap” exists within the leading sectors, indicating that the presence of an AI-skilled director does not inherently guarantee the existence of formal corporate policies (Figure 3).

Figure 3: Gap between Board AI Skills and Formal AI Policies

Note: The figure covers 275 companies (for policies and procedures) and 481 companies (for AI directors).

Source: ISS Governance QualityScore, January 2026

This discrepancy suggests that many “AI-fluent” boards are currently operating without the standardized, traceable frameworks necessary to translate individual skills into institutionalized risk management.

This lack of institutionalized policy creates a critical “transparency gap” for investors seeking to assess how companies mitigate algorithmic bias or ensure data privacy. Consequently, the presence of a formal AI policy has become a primary differentiator of “governance leaders” in the current 2026 landscape. Until these policies permeate beyond a tech-centric minority, the broader market remains structurally unequipped for the long-term oversight of general-purpose AI.

The Concentrated Landscape: Observational Trends in AI Maturity and Board Preparedness

The significant concentration of both AI skills and board oversight in the “Top Five” sectors suggests that AI governance is currently being treated as a specialized industry concern rather than a systemic, market-wide priority. This creates a noticeable “governance vacuum” in sectors such as Energy, Utilities, and Materials, where the rapid integration of AI into physical infrastructure may be outpacing the technical fluency of their boards.

For investors, the data reveals a “Lone Expert” vulnerability, where the oversight of high-stakes technology often rests on a single director rather than an institutionalized committee structure. Further, the persistent gap between the presence of AI-skilled directors (16%) and the adoption of formal AI policies (9%) suggests that many organizations have the personnel for oversight but have yet to codify the procedures required for consistent, traceable risk management. This discrepancy offers a specific entry point for stewardship teams to engage with companies on the transition from “individual fluency” to “institutional guardrails.”
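For readers who want to reproduce the headline percentages, the arithmetic can be sketched from the counts reported in this article. The snippet below is an illustrative sketch only, not part of the ISS Governance QualityScore methodology. The names `UNIVERSE`, `DISCLOSURES`, and `disclosure_rate` are hypothetical, and it assumes that every percentage, including the 4% for boards with two or more AI-skilled directors, is measured against the full 3,048-company universe.

```python
# Counts as reported in this article (ISS Governance QualityScore, January 2026).
# Assumption: all shares use the full 3,048-company universe as the denominator.
UNIVERSE = 3048

# Hypothetical labels for the four disclosure counts cited in the text.
DISCLOSURES = {
    "board_oversight": 245,
    "formal_ai_policy": 275,
    "ai_skilled_director": 481,
    "two_or_more_ai_directors": 128,
}


def disclosure_rate(count: int, universe: int = UNIVERSE) -> float:
    """Return the share of the universe, expressed in percent."""
    return 100.0 * count / universe


rates = {name: disclosure_rate(n) for name, n in DISCLOSURES.items()}

# Gap, in percentage points, between companies with an AI-skilled director
# and companies with a formal AI policy.
skills_policy_gap = rates["ai_skilled_director"] - rates["formal_ai_policy"]

for name, rate in rates.items():
    print(f"{name}: {rate:.1f}%")
print(f"skills-policy gap: {skills_policy_gap:.1f} percentage points")
```

Rounded to whole numbers, these rates match the 8%, 9%, 16%, and 4% figures cited above, and the skills-to-policy gap comes to roughly seven percentage points.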

Observationally, the lack of broad-based disclosure indicates that the current landscape is defined by uneven maturity, making cross-sector benchmarking difficult for diversified portfolios. Consequently, the primary opportunity for investor engagement lies in probing the depth of the oversight and the rigor of the monitoring policies, particularly in sectors where the data shows a high density of deployment but a low density of formal governance structures.

As the 2026 U.S. proxy season begins, Artificial Intelligence will be both under scrutiny by shareholders and targeted for expansion by corporate entities. In line with prior governance topics, such as Climate and Information Security, the concentration of policies, procedures, oversight, and skills may rapidly expand beyond the largest, highest-tech companies and permeate throughout the market. Investors may well closely monitor which companies are leaders and laggards in AI governance.

By:

Hinza Zeru, Vice President, Governance QualityScore, ISS STOXX

Andrew Schultz, Head of Governance Data Solutions, ISS STOXX